Selenium is a great tool of web scraping, but has some flaws which is normal because it was designed primarily for testing online applications. However, BeautifulSoup was created particularly for web scraping and is also an excellent tool.

But even BeautifulSoup has its own flaws as when data to be scraped is behind the wall and it requires user authentication or some other actions from user.

This is where Selenium may be used to automate user interactions with the website, and Beautiful Soup will be used to scrape the data once we are in the wall.

When BeautifulSoup and Selenium are combined, you get a perfect web scraping tool. Selenium can also scrape data but BeautifulSoup is far better.

We will use BeautifulSoup and Selenium to scrape movie details from Amazon Prime Video in several categories, such as description, name, and ratings, and then filter the movies depending on the IMDB ratings.

Let’s discuss the process of scraping Amazon Prime data.

Firstly, import the necessary modules

from selenium import webdriver from selenium.webdriver.common.keys import Keys from bs4 import BeautifulSoup as soup from time import sleep from selenium.common.exceptions import NoSuchElementException import pandas as pd

Make three empty lists to keep track of the movie information.

movie_names = [] movie_descriptions = [] movie_ratings = []

Chrome Driver must be installed to work this program properly. Make sure you install the driver that relates to your browser version of chrome.

Now, define a function called open_site() that opens the sign-in page of Amazon Prime.

def open_site():

options = webdriver.ChromeOptions()

options.add_argument("--disable-notifiactions")

driver = webdriver.Chrome(executable_path='PATH/TO/YOUR/CHROME/DRIVER',options=options)

driver.get(r'https://www.amazon.com/ap/signin?accountStatusPolicy=P1&clientContext=261-1149697-3210253&language=en_US&openid.assoc_handle=amzn_prime_video_desktop_us&openid.claimed_id=http%3A%2F%2Fspecs.openid.net%2Fauth%2F2.0%2Fidentifier_select&openid.identity=http%3A%2F%2Fspecs.openid.net%2Fauth%2F2.0%2Fidentifier_select&openid.mode=checkid_setup&openid.ns=http%3A%2F%2Fspecs.openid.net%2Fauth%2F2.0&openid.ns.pape=http%3A%2F%2Fspecs.openid.net%2Fextensions%2Fpape%2F1.0&openid.pape.max_auth_age=0&openid.return_to=https%3A%2F%2Fwww.primevideo.com%2Fauth%2Freturn%2Fref%3Dav_auth_ap%3F_encoding%3DUTF8%26location%3D%252Fref%253Ddv_auth_ret')

sleep(5)

driver.find_element_by_id('ap_email').send_keys('ENTER YOUR EMAIL ID')

driver.find_element_by_id('ap_password').send_keys('ENTER YOUR PASSWORD',Keys.ENTER)

sleep(2)

search(driver)

Let's create a search() function that looks for the genre specified.

def search(driver):

driver.find_element_by_id('pv-search-nav').send_keys('Comedy Movies',Keys.ENTER)

last_height = driver.execute_script("return document.body.scrollHeight")

while True:

driver.execute_script("scrollTo(0, document.body.scrollHeight);")

sleep(5)

new_height = driver.execute_script("return document.body.scrollHeight")

if new_height == last_height:

Break

last_height = new_height

html = driver.page_source

Soup = soup(html,'lxml')

tiles = Soup.find_all('div',attrs={"class" : "av-hover-wrapper"})

for tile in tiles:

movie_name = tile.find('h1',attrs={"class" : "_1l3nhs tst-hover-title"})

movie_description = tile.find('p',attrs={"class" : "_36qUej _1TesgD tst-hover-synopsis"})

movie_rating = tile.find('span',attrs={"class" : "dv-grid-beard-info"})

rating = (movie_rating.span.text)

try:

if float(rating[-3:]) > 8.0 and float(rating[-3:]) < 10.0:

movie_descriptions.append(movie_description.text)

movie_ratings.append(movie_rating.span.text)

movie_names.append(movie_name.text)

print(movie_name.text, rating)

except ValueError:

Pass

The function searches genre and scrolls till the bottom of page because Amazon Prime Video scrolls endlessly as it uses JavaScript executor and then call driver to acquire page_source. This source is then utilized and feeded to BeautifulSoup.

To be sure that the if statement is looking for movies with a rating of more than 8.0 but less than 10.0.

Let's make a pandas data frame to hold all our movie information.

def dataFrame():

details = {

'Movie Name' : movie_names,

'Description' : movie_descriptions,

'Rating' : movie_ratings

}

data = pd.DataFrame.from_dict(details,orient='index')

data = data.transpose()

data.to_csv('Comedy.csv')

Now let’s try the function we already discussed

open_site()

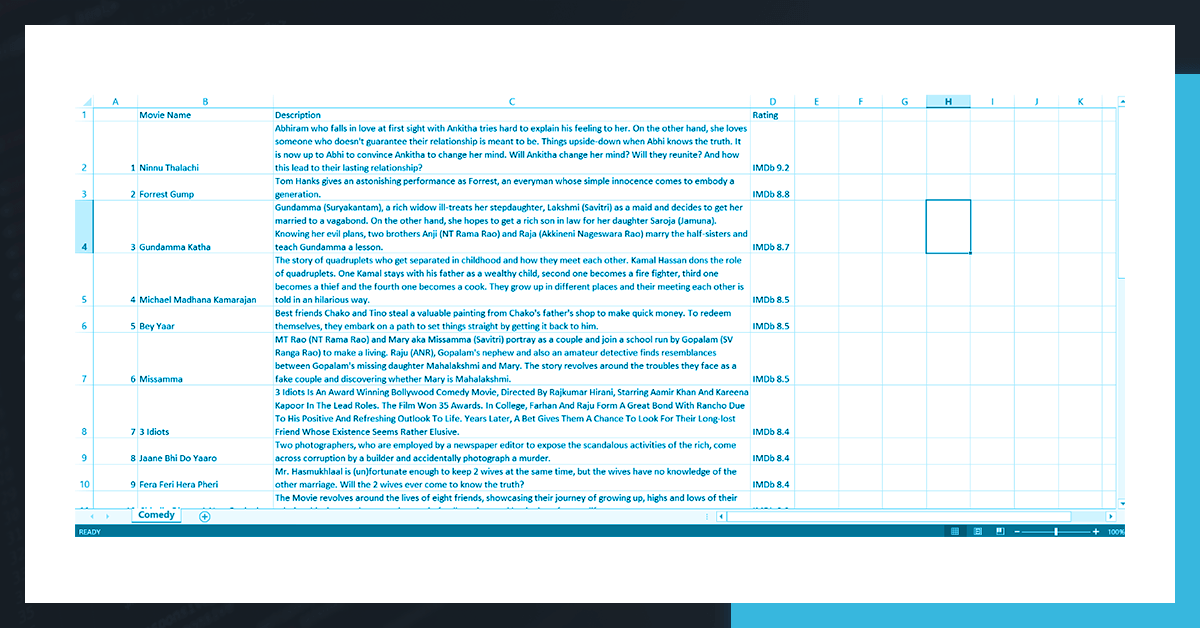

Result

The result you get will not look the same as here it is formatted such as Column width, text wrap, etc. otherwise it will look almost same.

Conclusion

Hence, BeautifulSoup and Selenium work well together and provides best results considering Amazon Prime Video but Python has other tools also like Scrapy and it is also equally strong.

Looking for best web scraping services to get Amazon Prime data? Contact Web Screen Scraping today!

Request for quote!